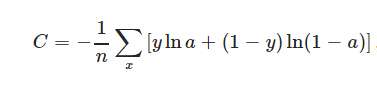

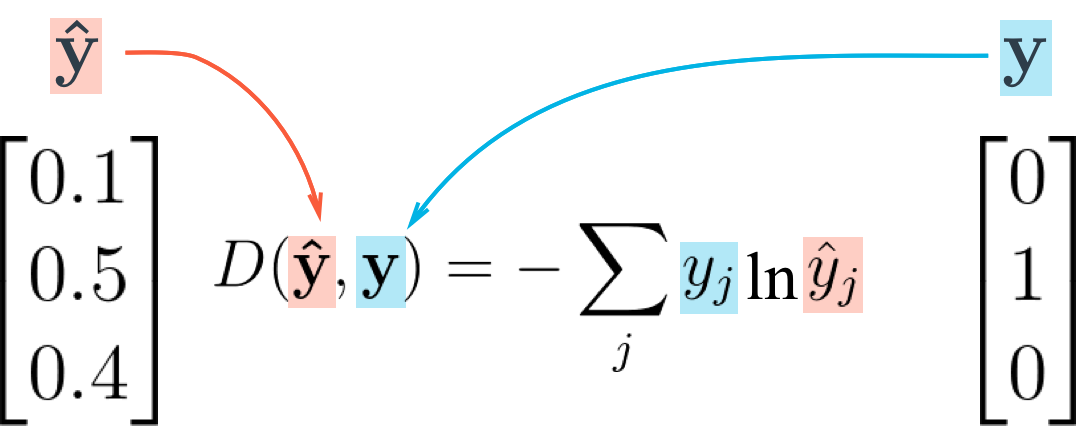

Such networks became popular mainly due to their top performance in many real-life problems. However, these impressive results could be obtained only with huge, well-annotated data collections. Some large datasets are publicly available, but very often one needs to prepare training data manually, which can be an expensive and time-consuming process. Even if the data labels are generated by data mining algorithms or web search engines, they still suffer from label noise. In this Letter, we propose a simple way to make the training process more robust to noisy data. Without changing the network architecture or the learning algorithm, we apply a modified loss as a new error criterion. The loss is based on the idea of robust trimmed estimators applied to the well-known categorical cross-entropy error.

Training neural networks in the presence of noise or outliers has very often been considered for regression tasks. Basic methods to make the network more robust to outlying data points usually involve modified error functions. These new loss measures decrease the influence that large residuals may have on the whole training process. In instance selection algorithms, such as compression methods and noise filters, outliers can be detected and excluded from datasets.
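The idea of applying a trimmed estimator to the categorical cross-entropy can be illustrated with a minimal NumPy sketch. The exact definition is not given in this passage, so the form below is an assumption: the per-sample cross-entropy values are sorted and only the smallest ones are averaged, so that samples with the largest losses (the likely mislabelled ones) do not contribute. The function name and the `keep_fraction` parameter are hypothetical and chosen only for illustration.

```python
import numpy as np


def trimmed_categorical_cross_entropy(y_true, y_pred, keep_fraction=0.9, eps=1e-12):
    """Average the cross-entropy over the samples with the smallest loss.

    y_true: one-hot labels, shape (n_samples, n_classes).
    y_pred: predicted class probabilities, same shape.
    keep_fraction: hypothetical trimming parameter, the share of samples kept.
    """
    y_true = np.asarray(y_true, dtype=float)
    # Clip predictions to avoid log(0).
    y_pred = np.clip(np.asarray(y_pred, dtype=float), eps, 1.0)

    # Ordinary categorical cross-entropy, one value per sample.
    per_sample = -np.sum(y_true * np.log(y_pred), axis=1)

    # Keep the smallest losses and discard the largest ones (assumed trimming rule).
    n_keep = max(1, int(np.floor(keep_fraction * per_sample.shape[0])))
    smallest = np.sort(per_sample)[:n_keep]
    return smallest.mean()
```

With `keep_fraction=1.0` the sketch reduces to the ordinary mean categorical cross-entropy, so the network architecture and the learning algorithm can stay unchanged and only the error criterion differs.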